Cancers are notorious for their high mortality rates and increasing incidence worldwide. Among them, lung cancer is arguably one of the most devastating. According to the World Cancer Research Fund International, lung cancer was the second most common cancer worldwide in 2020, with more than 2.2 million new cases and 1.8 million deaths.

However, lung cancer, like other cancers, is easier to treat if caught early. “The reported 1-year survival rate for stage IV is just 15% to 19% compared with 81% to 85% for stage I, which means that the early differentiation of benign and malignant pulmonary nodules can effectively reduce mortality,” said Xingguang Duan, a scientist from the School of Medical Technology, Beijing Institute of Technology.

Duan and his colleagues believe that early diagnosis of lung cancer is essential for timely treatment and better prognoses. To this end, they devised a novel robotic bronchoscope system that can non-intrusively access the area of interest within the lung for minimally invasive sampling of pulmonary lesions, the gold standard for lung cancer diagnosis. The research was published on Mar. 15 in the journal Cyborg and Bionic Systems.

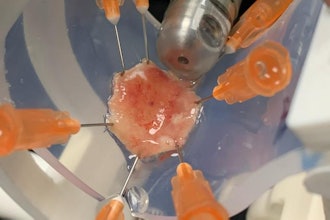

“Imagine inserting a long, thin line into your mouth and through your airway to cut a small part of the lesion of interest within your lung. After that, this thin line brings the cut sample back for further examination to determine whether it’s benign or malignant,” said the study authors. “This is what the bronchoscope system was designed for. Our end effector is just like a thin line. To do so, this line must first be thin enough to pass through the trachea and bronchus.”

“Moreover, it must be able to bend, rotate and translate to flexibly navigate the complex airway network,” explained Changsheng Li, the corresponding author of this research, from the School of Mechatronical Engineering, Beijing Institute of Technology.

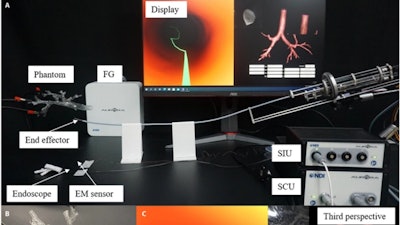

“Another problem is that you can’t see the end effector of this line through the patient’s chest. However, to accurately approach the lesion of interest, the position and pose of the end effector must be determined in real time,” said the study authors. They addressed this problem by developing a navigation system that attaches an endoscope and two electromagnetic sensors to the end effector. This setup enables online positioning for navigation and provides visual information for doctors to diagnose and sample.

The navigation system also reconstructs a three-dimensional virtual model from computed tomography data and, once a target area has been chosen, uses it for robotic path planning. By combining the flexible end effector with the navigation system, the robotic bronchoscope system can automatically reach the target lesion and provide intraoperative visual guidance for biopsy sampling.
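
To make the idea of path planning concrete, here is a minimal sketch, not the authors' method. It assumes the reconstructed airway can be simplified to a tree of named branches and uses a plain breadth-first search to find the route from the trachea to a chosen target branch. The branch names, the `AIRWAY_TREE` structure, and the `plan_path` function are all hypothetical and exist only for illustration.

```python
from collections import deque

# Hypothetical, heavily simplified airway tree: each branch lists its children.
# A real system would derive a far more detailed structure from CT data.
AIRWAY_TREE = {
    "trachea": ["left_main", "right_main"],
    "left_main": ["left_upper", "left_lower"],
    "right_main": ["right_upper", "right_middle", "right_lower"],
}


def plan_path(tree, start, target):
    """Return the list of branches from start to target, or None if unreachable."""
    queue = deque([[start]])  # each queue entry is a partial path
    visited = {start}
    while queue:
        path = queue.popleft()
        branch = path[-1]
        if branch == target:
            return path
        for child in tree.get(branch, []):
            if child not in visited:
                visited.add(child)
                queue.append(path + [child])
    return None  # target branch is not in the tree


if __name__ == "__main__":
    print(plan_path(AIRWAY_TREE, "trachea", "right_lower"))
```

In this toy model, choosing the target area corresponds to picking the `target` branch. A real planner would work on three-dimensional geometry and account for the bending limits of the end effector.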

The team verified the feasibility of the robotic bronchoscope system in an ex vivo navigation-assisted intervention experiment. “During the experiment, the position displayed by the third perspective of the virtual endoscope is consistent with the position of the end effector’s tip relative to the airway phantom from visual observation. The virtual endoscope view also matches the real video acquired by the endoscope module as expected,” said Li.

“Although the system has achieved promising bronchoscopy performance, as a medical device, there are still some issues that limit its clinical application and promotion,” explained the study authors. “First of all, more convenient maneuvering is required so that surgeons can readily learn and use the system; second, a high-resolution endoscopic view is required; finally, the calibration between virtual and actual environments is still time-consuming and unfriendly to novices.”

In the future, the team will focus on minimizing the diameter of the end effector and adopting replaceable modules for diverse surgical requirements, exploring joint control to give the robot more flexible movement in the airway, and adopting computer vision algorithms to achieve automatic and intelligent calibration.

The authors of the paper include Xingguang Duan, Dongsheng Xie, Runtian Zhang, Xiaotian Li, Jiali Sun, Chao Qian, Xinya Song, and Changsheng Li.

The research was funded by the Beijing Municipal Natural Science Foundation–Haidian Primitive Innovation Joint Fund Project, the National Natural Science Foundation of China, and the National Natural Science Foundation of China–Shenzhen Robotics Research Center Project.